Every seminar I do on Social Media for Research Impact ends up in the same place. Someone asks about AI. Not whether to use it — most people already do — but where the line is. I have been thinking about that question for a while, and I want to try to answer it properly here, because the answer extends well beyond social media.

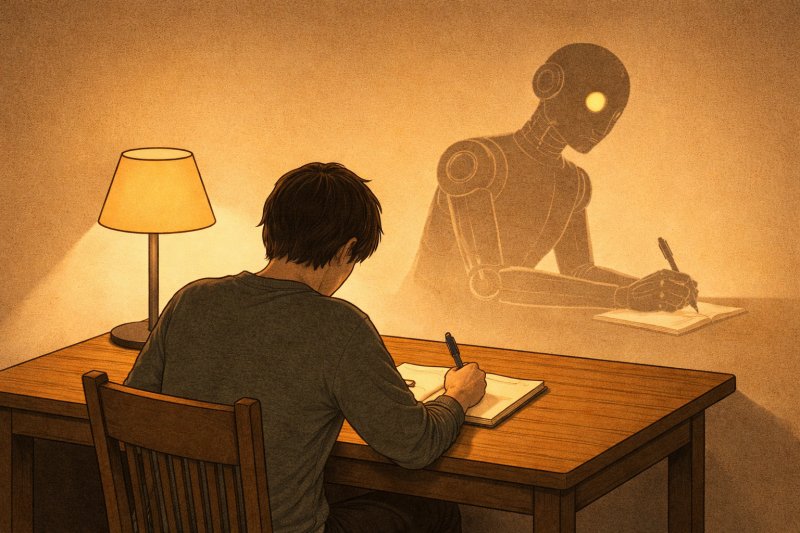

Start with LinkedIn. Feeds are increasingly full of posts that are polished, well-structured, and completely hollow. You read them and cannot find the human. The term for the worst of this is "AI slop," and it is spreading fast. Several colleagues have told me they check LinkedIn less now for exactly this reason: they no longer know who they are actually reading. I notice it too — and I will admit I have not always been immune to the temptation of just feeding a prompt into a chatbot and posting what comes back.

This matters a great deal if you care about the Personality element of the Researcher's Social Media Compass from my book: authenticity is not a nice extra, it is the whole point of being visible as a scholar. But the stakes are higher once you move from LinkedIn posts to papers, grant proposals, and PhD theses. So let me go there.

The cognitive cost is real

The research is starting to catch up with the intuition. A study by Bastani and colleagues, published in PNAS in 2025, found that AI tutoring improved student performance during practice — but when the AI was removed, those students underperformed relative to peers who had struggled without assistance (Bastani et al., 2025). The skill had not been acquired; the task had simply been completed. Separate work, presented at the CHI Conference, found that higher confidence in AI correlates with less critical thinking effort among knowledge workers (Lee et al., 2025). And a study published in Computers in Human Behavior found that LLMs reduce mental effort but at the cost of reasoning depth in student inquiry (Stadler et al., 2024). The pattern across these studies is consistent: routine outsourcing of cognitive effort atrophies exactly the skills being outsourced.

This is not a surprising finding if you have spent time supervising students. I have read several recent drafts that were, frankly, too good — grammatically clean, well-organized, somehow generic. The real test comes in the conversation. When someone cannot explain what they wrote, or cannot defend the argument they apparently made, the question answers itself. Did they write it? Not really.

The voice problem

Research on AI-assisted writing consistently finds the same dilemma: AI makes writing sound better and stops it sounding like you. A 2024 study published in Technology, Knowledge and Learning (Springer Nature) captures this precisely — one student noted that when ChatGPT replaced "in moderation" with "judiciously," it felt inauthentic, and they were right to notice (Cong & Gao, 2024). This experience of being "AI-nized" — having your voice replaced by a generic academic register — is documented across multiple studies. A 2025 study by Khuder at Chalmers University, published in Applied Linguistics, reinforces the underlying mechanism: suggestive feedback fosters voice and autonomy; directive intervention erodes both (Khuder, 2025). AI defaults toward directive. It rewrites rather than asks.

This is especially acute for non-native English speakers, where the fluency uplift is immediate and real, which also makes it the most seductive use case. The problem is that, when writing in a second language, you are least able to detect when your own voice has been replaced by a smooth, generic academic register that could belong to anyone. Editing tools that focus on polish rather than generation are more defensible than full drafting, but the line between "improve my prose" and "write my argument" is blurring fast.

The systemic risk nobody is talking about enough

The same problem shows up in teaching. Assignments arrive looking excellent: well-structured, nicely formatted, clearly argued. Then the presentation happens, and it becomes obvious that the student does not own the content. They can reproduce it but cannot extend it, question it, or connect it to something unexpected. That gap is a serious problem, not for the grade, but for what a PhD or a master's education is actually supposed to produce.

If a generation of junior scholars routinely uses AI to write their papers, we will not only gradually lose the ability to assess whose ideas are whose, but also see researchers reach mid-career without having developed an analytical voice of their own. A voice takes years to build through the friction of actually writing. You cannot shortcut that process and then reconstruct it later. My advice to any junior scholar is to develop your human intelligence first, and only then decide what to hand to the AI.

In my research on open innovation, the core insight is that "not all smart people work for you" — you have to reach outside for knowledge. But that has never meant outsourcing your entire strategy to someone else. You stay the decision-maker. The same logic applies here: AI can be a collaborator on expression. It cannot be the source of your ideas.

What responsible use actually looks like

I use AI tools myself — including, in part, to help draft this post — and I am transparent about it. But my rule is this: the argument has to exist before the AI touches it. If you could not have written it without the AI generating the substance, the ideas are not yours. Besides legal and ethical concerns, this is simply a practical reality.

Responsible AI use requires that primary ideas and interpretations remain yours, that you maintain competency in the underlying skills, and that you acknowledge use transparently. Not just because of reputation — though that matters — but because the skills are the career.

I am not against using AI at all. But do it in a smart way. For example, design your own custom AI that only asks you questions and never proposes text — an external coach rather than a ghostwriter. And that reminds me of advice my English teacher gave me at secondary school: if you look up a word in the dictionary and you don't recognize it, don't use it. The same applies to anything coming from AI. If it doesn't sound like you, or you wouldn't have said it yourself, leave it out.

For junior scholars, specifically

Write the first draft yourself. Always. Even when it is rough. Even when it takes three times as long. The first draft is not the output — it is the thinking. That is where the argument gets made, tested, revised, and owned. Delegate that process, and you have not written a paper — and you may never learn how to write one properly.

Use AI the way you might use a good mentor: ask it to push back on your argument, suggest a better structure, flag where you have been unclear. Then make those changes yourself. Don't fall for the trap of borrowed smartness — it looks good until someone asks you to explain it.

Finally, a word of caution on efficiency: if AI is making your writing dramatically faster, you are probably skipping the part that matters. The spelling checks go quicker, yes, but don't fall for the productivity trap. Good thinking still takes as long as it ever did — and there are no shortcuts to developing it, or recovering it once the habit of outsourcing is set.

(I wrote this roughly 1,000-word post using a prompt of about 2,000 words, which tells you something about the ratio of thinking to output, and why I think that ratio matters.)

This post was drafted with AI assistance. The arguments, observations, and opinions are my own.

Marcel Bogers is a Full Professor of Open & Collaborative Innovation at the Eindhoven University of Technology and a Research Fellow at the University of California, Berkeley.

He speaks, writes, and advises on how organizations can create and capture value through openness and collaboration.

Blog posts written with some help from AI! 🙂
